In revisiting HBR’s challenging 2016 article ‘The Great Training Robbery’, Cranfield University experts Wendy Shepherd and Mark Threlfall reveal seven bad practices in executive development that stifle impact—and five key drivers for improving it.

“By investing in training that is not likely to yield a good return, senior executives and their HR professionals are complicit in what we have come to call the ‘great training robbery.’” (Harvard Business Review, 2016)

In their incendiary 2016 HBR article, Michael Beer, Magnus Finnstrom, and Derek Schrader decried the lack of real impact and ROI delivered by large swathes of executive education provision. It was a vital, discomfiting read, and its implications are as important today as they were then.

Five years on, what are providers and clients doing to improve the impact of their interventions? How has the industry evolved to confront the issue of impact, and are we in a better place now than we were then? As buyers of executive development in the corporate world, what steps should we be taking to ensure a real return on our investment, and what common missteps should we be wary of?

The post-pandemic ‘build back better’ imperative, to reset agendas and welcome progressive ideas across so many sectors of business and society, offers an opportunity for all sides of the executive education industry to revisit their thinking and practice around impact.

This was the theme of a recent webinar hosted by IEDP, in which experts from Cranfield University School of Management tackled these important questions: debunking bad practices, highlighting common value destroyers in executive education, and providing a framework of five key drivers to supercharge impact.

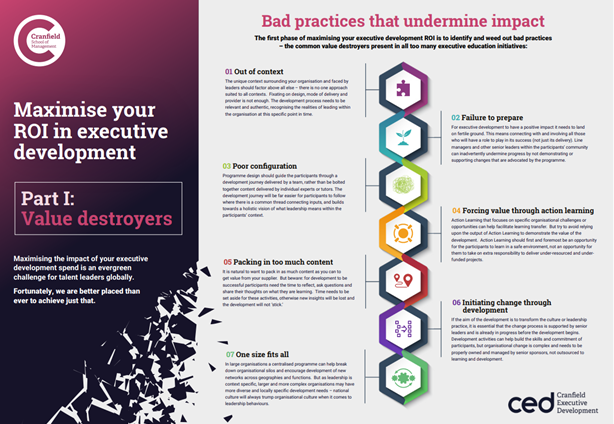

First: the bad practices—the value destroyers that too often hamper and derail even the best-intentioned learning initiatives. Mark Threlfall, Director of Executive Development at Cranfield, highlights the key issues to be aware of.

Bad practices that undermine impact

1. Context trumps design and delivery

Design, mode of delivery, and choice of provider are all important areas of focus, but fixating on them at the expense of truly understanding the context into which a program will be delivered can lead to failure. An initial understanding of the learners’ situation, environment, and organisational context is crucial.

2. Failure to prepare the learning landscape

For an executive development intervention to deliver impact, it needs to land on fertile ground. It needs to connect to the whole organisational ecosystem into which it is set. Winning buy-in and engagement takes preparation and the clear communication of roles, responsibilities, and expectations to all stakeholders.

3. Configure to context

The commissioning agent and the provider should ensure a receptive landing ground before configuring the program to suit it. The program configuration should be context-led rather than content-led. All too often the opposite is true.

4. Action learning projects as a substitute for real impact

Action or enquiry-based learning, through cycles of doing and reflecting, is widely used in executive education. To have real impact, it is important to establish clearly whether such projects are vehicles for learning (which is fine) or quasi-consulting engagements, in which case the client needs to build them into the learners’ day-to-day tasks.

5. Content-driven vs process-driven interventions

The pace of a program can be dictated by the inclusion of too much content. It is important to be sensitive to the ‘learning journey’ and to pace interventions so that they allow time for reflection and assimilation.

6. Alignment and engagement of key stakeholders

Organisational impact from learning interventions very much depends on the commitment and buy-in of the learners’ supervisors and superiors—the senior executive team and the relevant line managers. Planning and communications should keep this aim front of mind at all times.

7. Distinguish between learning interventions and change interventions

Executive training and skills development can be important as part of managing a change process. However, change involves much more than learning alone, and it is a mistake to overburden learning interventions with expectations they cannot deliver.

Beyond these systemic issues, some more basic derailers can also hamper or dilute impact: program content that lacks quality and relevance (something providers should proactively call out), a lack of learner support, or a failure to provide the psychological safety that allows learners to try new things and give feedback to the organisation.

Better ways to measure impact

Dr Wendy Shepherd, Director of Individual and Organisational Impact at Cranfield, believes passionately in the value of executive development, a belief founded on her own extensive personal experience. Shepherd is clear that measuring how impact occurs at an organisational level is a complex task, but certainly not an impossible one. Her own research into learning interventions in large organisations highlights a range of effective ways to measure ROI.

Dr Shepherd explains that using financial measures alone to track impact is insufficient. Any financial upturn coinciding with a learning intervention is unlikely to be due solely to the learning; good work done in other parts of the organisation will also have played a part.

To look beyond financial measures and ensure that vital learning and change capabilities are being measured and captured, Shepherd has developed a framework of five key mechanisms which, her research shows, demonstrably link learning interventions to organisational-level outcomes.

Five key drivers of impact in executive development

1. Conversations

The quality, nature and content of conversations are vital to achieving positive outcomes at the organisational level. Are you having better conversations as a result of your learning interventions?

2. Sensemaking

Learning interventions can lead to changes in the way learners think about problems. A logical mindset might be broadened by exposure to ideas around emotional intelligence, for example. Changes in critical thinking impact performance.

3. Alignment

Changes in priorities and better alignment with organisational requirements.

4. Engagement

Changes in discretionary effort and commitment.

5. Relationships

Changes in networks and relationships as a result of learning interventions.

Focusing on these impact drivers makes it possible to gather data that can lead to a greater understanding of what works, for whom, and in which contexts. This, in turn, will give clients of executive education opportunities to work with providers and program designers to develop interventions whose impact and ROI can be measured more accurately.

Five years on from HBR’s ‘Great Training Robbery’ article, impact (and, often, a misalignment in how it is measured) remains a crucial issue for the sector to tackle. The good news is that, through thoughtful research, design, and best practice, the top university-based executive development providers today are far better attuned to the precise needs of their clients and, drawing on ideas such as those presented here, better able to plot, measure, and demonstrate real impact from the learning interventions they offer.

Contributors

Dr Wendy Shepherd, Director of Individual and Organisational Impact, Cranfield Executive Development

Mark Threlfall, Director, Cranfield Executive Development